Major social media platforms are retreating from protections for LGBTQ+ users and helping fuel a more hostile online environment, according to GLAAD’s annual Social Media Safety Index released last week, which found declining safety scores across nearly every major platform reviewed.

The report accuses companies, including Meta and YouTube, of weakening moderation policies, rolling back protections for transgender users, suppressing LGBTQ+ content, and failing to curb escalating anti-LGBTQ+ hate and disinformation online.

GLAAD’s report argues the changes reflect a broader shift inside major tech companies away from moderation and trust-and-safety practices, even as anti-LGBTQ+ rhetoric and legislation intensify nationally.

“What we did not expect, however, was a steady and precipitous erosion of those scores,” GLAAD President and CEO Sarah Kate Ellis wrote in the report. “Both Meta and YouTube have implemented and sustained calculated policy changes this past year that knowingly make LGBTQ people less safe.”

The report evaluated six major platforms — TikTok, YouTube, X, Facebook, Instagram, and Threads — across 14 LGBTQ-specific indicators related to online safety, privacy, and expression. None passed.

TikTok received the highest score at 56 out of 100. Instagram scored 41, Facebook 40, Threads 39, YouTube 30, and X 29.

The findings mark a worsening trajectory from last year’s report, when GLAAD warned that Meta’s policy changes represented an “extreme” retreat from established trust and safety standards. In the 2025 index, Instagram and Facebook each scored 45, YouTube 41, Threads 40, X 30, and TikTok 56. This year, Meta’s platforms all declined further, while YouTube suffered the steepest drop, falling 11 points after removing gender identity from its hate speech protections. TikTok was the only platform whose score did not decline, holding at 56.

The report sharply criticizes Meta over changes made in early 2025 to its hate speech and moderation policies. According to GLAAD, the company’s revised rules removed key protections for LGBTQ+ people, especially transgender and nonbinary users, while explicitly permitting users to characterize LGBTQ+ people as “mentally ill” or “abnormal.”

GLAAD said the changes also included removing moderation protections, ending the company’s U.S. fact-checking program, deleting trans and nonbinary Messenger themes, and dismantling diversity, equity, and inclusion initiatives.

The report also condemns Meta’s continued use of the term “transgenderism” in its Community Standards, explaining that it is a politicized anti-trans term used by right-wing activists to frame transgender identity as ideology rather than identity.

Nearly a year after Meta’s own Oversight Board recommended removing the term, the company recently stated it was still “assessing feasibility” of doing so, according to the report.

The report similarly criticizes YouTube for removing gender identity from its list of protected characteristics in hate speech policies, calling the move especially alarming amid escalating political attacks on transgender people nationwide.

Ellis said the companies’ failures extend beyond moderation decisions and into broader questions of corporate responsibility.

“Leading social media companies today do not meet basic best practices in content moderation, transparency, data privacy, and workforce diversity — and continuously refuse to meaningfully prioritize the safety, privacy, and expression of LGBTQ people and other marginalized communities,” Ellis said in a statement accompanying the report. “Advertisers should question commitments to LGBTQ safety and the disregard for the safety of LGBTQ users as they plan which platforms to continue to support.”

Ellis also addressed LGBTQ+ creators and advocates who face mounting harassment online.

“The threats in your DMs, the disinformation fueling anti-LGBTQ legislation, and the bullying that leads to real-world violence are not just ‘part of the job,’” Ellis said. “They are systemic failures that tech leaders have the tools to fix, yet they choose to profit from them instead.”

GLAAD argues that the online climate cannot be separated from the offline one.

“The political climate in the United States has shifted sharply since January 2025, with direct consequences for social media safety,” the report states. “Coordinated anti-LGBTQ campaigns have intensified, while major platforms have scaled back or eliminated safeguards designed to protect LGBTQ people online.”

The organization pointed to more than 1,000 anti-LGBTQ+ incidents documented nationwide by GLAAD’s ALERT Desk in 2025. According to the report, anti-LGBTQ+ bias accounted for more than 20 percent of reported hate crimes in 2024, the third consecutive year it has done so.

At the same time, GLAAD says platforms are increasingly relying on automated moderation systems and artificial intelligence tools that often fail in two directions at once, allowing anti-LGBTQ+ harassment to flourish while suppressing legitimate LGBTQ+ expression.

The report cites LGBTQ-related hashtags being blocked in Meta search functions for teens, creators facing demonetization and shadowbanning, and accounts wrongly removed or restricted.

It also warns of a growing wave of AI-generated anti-LGBTQ+ deepfakes and nonconsensual imagery, which GLAAD says exposes significant gaps in platform moderation systems.

Behind many of these failures, the report argues, is a business model built to reward emotional escalation.

“Research shows that emotionally charged and divisive content, including outrage, provocation, and conflict, is particularly effective at driving engagement,” the report states. “This creates a structural incentive to allow misinformation and harmful content to circulate and spread.”

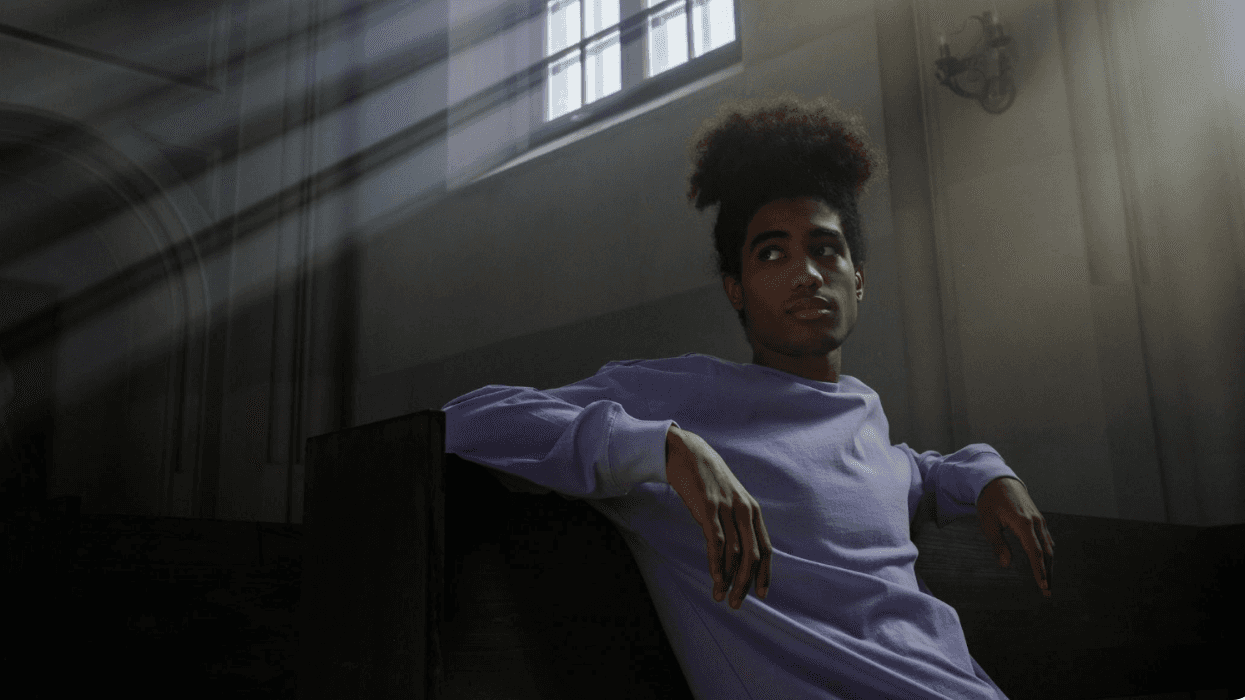

The report also highlighted widespread fear and exhaustion among LGBTQ+ users themselves. According to survey findings cited from the “Make Meta Safe” initiative, 92 percent of respondents said they were concerned that harmful content had increased since Meta’s policy rollbacks, while 77 percent said they felt less safe expressing themselves freely on Meta platforms.

GLAAD urged platforms to restore protections for LGBTQ+ users, improve transparency around algorithms and moderation practices, limit data collection related to sexual orientation and gender identity, and invest in trained human moderators rather than relying primarily on AI systems.

“Social media platforms are vitally important for LGBTQ people as spaces where we connect, learn, and find community,” the report states. “While there are positive initiatives these companies have implemented to help support and protect their LGBTQ users, they simply must do more.”